A couple of years ago, “AI-generated image” was a phrase that came with a knowing look: everyone understood it meant something that was impressive in theory but identifiable at a glance. There were wrong fingers, strange lighting, and text that looked like it had been written by someone who’d never seen an alphabet. That era is over.

The quality leap in image generation models between 2024 and 2026 has been genuinely dramatic, and the tools available to creators, marketers, and designers right now bear almost no resemblance to what was available even eighteen months ago. Here’s a look at what’s actually changed and what the major breakthroughs mean in practice.

Table of Contents

ToggleWhat’s Actually Breaking Through

Let’s break down the key advancements that are pushing image generation models to a whole new level.

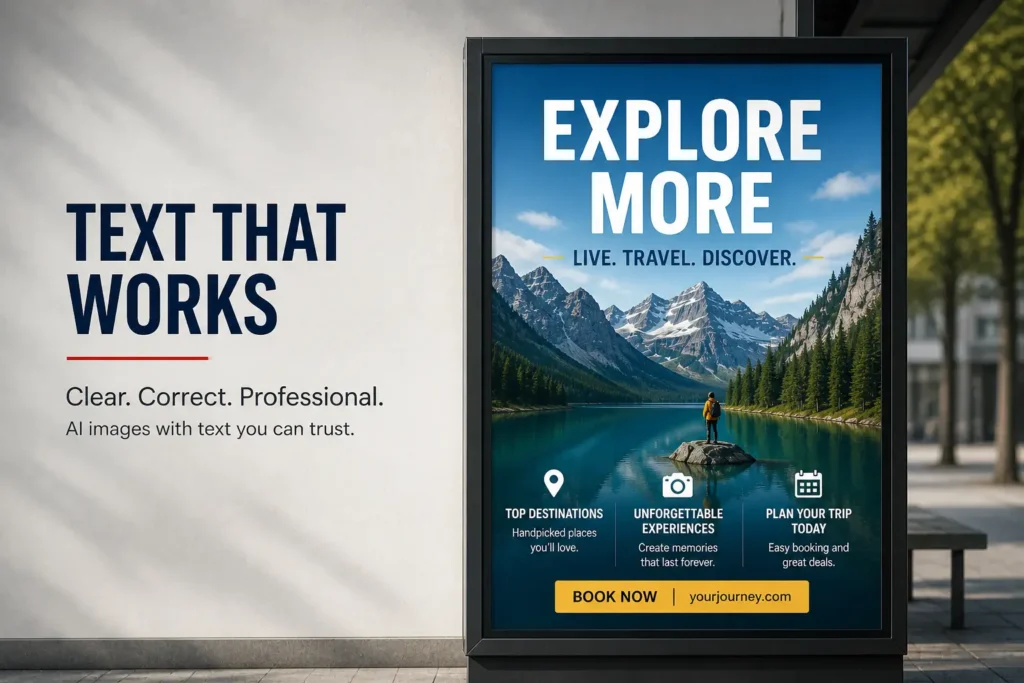

Text Rendering: The Problem That Finally Got Solved

For years, getting legible, correctly spelled text inside an AI-generated image was essentially impossible. You must have definitely seen logos with garbled letters, posters with nonsense words, and thumbnails that looked like a fever dream. It was the limitation keeping AI image tools out of professional workflows.

The 2025 generation of models broke through this convincingly. Legible multi-word text in complex compositions is now reliably achievable. Poster copy, UI strings, multi-panel comics with readable dialogue are consistently usable rather than occasionally acceptable.

Tiny fonts and text wrapped around curved objects still have weak spots, but the baseline has shifted dramatically.

Character Consistency Across Generations

Generate the same person twice in early AI image tools and you’d get two different people. For creators building visual series, branded content, or story-driven output, this made AI image generation fundamentally unusable for anything requiring continuity.

The models released through 2025 addressed this directly. Subject reference features where you supply multiple images of the same character from different angles now allow creators to maintain facial features, clothing, lighting, and overall visual identity across many generations.

Photorealism Without the “AI Look”

The plasticky, over-smoothed quality that made early AI images immediately recognizable has largely disappeared from the leading models. The new generation of image generators produces textures, skin tones, material surfaces, and lighting behavior that now pass scrutiny at a level early tools simply couldn’t achieve.

The shift is driven by advances in how models understand and simulate real-world physics. Light falloff, shadow transitions, material reflectivity, etc, now behave with internal consistency across the frame.

Individual fur strands, fabric weaves, water droplets, and architectural details all render with a level of naturalistic specificity that previously required specialist CGI tools.

Speed and Resolution as Standard, Not Premium

In early generations of AI image tools, high resolution and fast generation were expensive edge cases. If you remember, you paid significantly more for if you needed either. That framing has flipped.

2K and 4K output is now standard across the leading models rather than a premium tier feature. Generation times that once measured in minutes now routinely run in seconds.

This matters practically because it changes how iteration works. When a generation takes thirty seconds and costs fractionally more than a cheap stock photo, creators can explore dozens of variations, test different creative directions, and find what actually works rather than committing to an early output because regenerating costs too much time or money.

The Models Leading the Way

Now that we’ve looked at what’s changing, let’s take a closer look at the models that are actually driving this progress forward.

Imagen 4 Ultra

Google DeepMind’s Imagen 4 family, launched in mid-2025, offers a three-tier structure (Fast, Standard, and Ultra), each optimized for different points on the speed-quality-cost spectrum. Imagen 4 Ultra sits at the top, and its defining characteristic is something the industry had been chasing for years: reliable alignment between what you describe and what actually appears.

Where many models freely interpret prompts, Imagen 4 Ultra follows complex, detailed instructions with genuine fidelity. For instance, multi-panel comics with specific layouts and dialogue, vintage postcards with specific landmarks, photographic portraits with precise lighting conditions. Both Imagen 4 and Imagen 4 Ultra support up to 2K resolution.

GPT-4o Image Generation

OpenAI’s integration of image generation natively into GPT-4o represented a different kind of breakthrough. It is less about raw image quality than about what becomes possible when a model with genuine world knowledge generates images.

When the model generating the image understands context, spatial relationships, and design logic, outputs are more contextually accurate and compositionally coherent rather than aesthetically generic.

The practical result is a model that excels at following complex prompts precisely, rendering text accurately, and integrating seamlessly with ChatGPT’s conversational context.

FLUX.2

Black Forest Labs released FLUX.2 in November 2025 and immediately changed the conversation around what open-source image generation can deliver.

Built on a Multimodal Diffusion Transformer architecture with 12 billion parameters, FLUX.2 produces prompt adherence, photorealism, and text rendering that ranks competitively against major proprietary models in independent benchmarks.

FLUX.2’s most practically significant features is multi-reference image input: supply multiple reference images and it changes background, lighting, or pose without altering a character’s face or a product’s design.

The Bottom Line

The breakthroughs in image generation right now are not primarily about aesthetic improvement anymore. Instead, they’re about reliability, precision, and professional usability. Text renders correctly. Characters stay consistent. Photorealism is genuine, not approximate.

The gap between what these tools could do eighteen months ago and what they can do today isn’t a matter of degree. It’s a different category of output entirely!